Semantic Kernel

Entry

Created: 10 Jan 2026

Updated: 11 Jan 2026

The Functional Architecture of Semantic Kernel

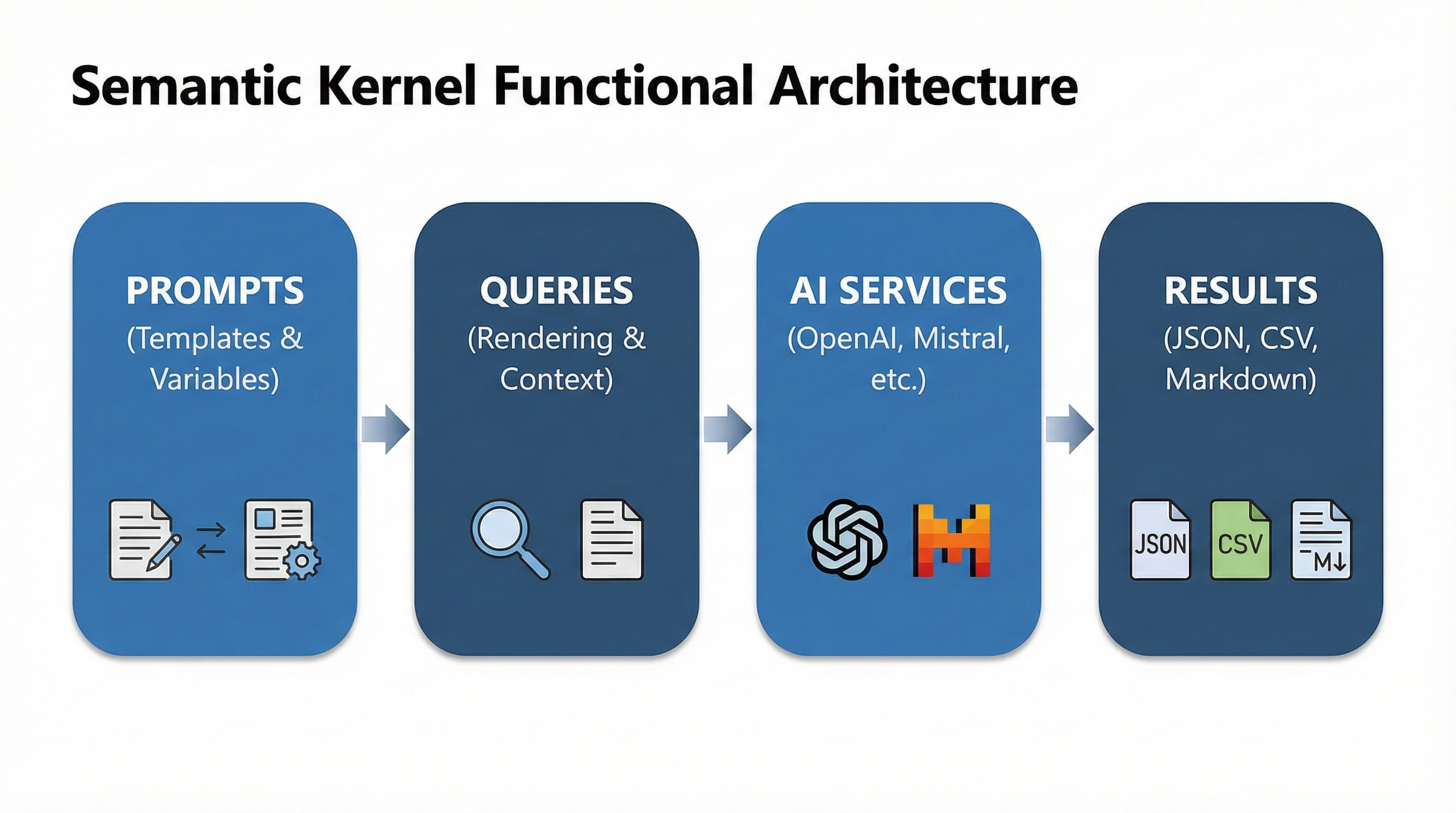

The power of Semantic Kernel lies in its ability to transform raw intent into structured action. It achieves this through a high-performance pipeline consisting of four primary stages: Prompts, Queries, AI Services, and Results.

1. The Core: Managed Prompts

In Semantic Kernel, a prompt is more than just a string of text; it is a Semantic Function.

- Dynamic Templating: The Kernel uses a sophisticated templating engine that allows for variable injection and function nesting (e.g.,

{{$input}}or{{MyPlugin.MyFunction}}). - Prompt Engineering Support: Out of the box, the architecture is designed to support advanced techniques such as Chain-of-Thought (CoT), Few-Shot Learning, and Prompt Chaining, where the output of one prompt serves as the input for the next.

2. The Transformation: Query Rendering

Before an LLM can process an instruction, the Kernel must "render" the prompt into a Query. This stage is where the magic of orchestration happens:

- Context Injection: The Kernel automatically resolves placeholders by pulling data from the Kernel Memory (RAG) or the current Execution Context (e.g., user preferences or conversation history).

- Model Optimization: The rendering process is model-aware. It formats the query (e.g., ChatML for OpenAI or specific templates for Llama/Mistral) to ensure the highest quality response from the target AI service.

3. The Engine: AI Services

Semantic Kernel acts as a high-level abstraction over the AI Services layer.

- Model Agnosticism: You can seamlessly switch between cloud-scale providers (Azure OpenAI, Google Gemini) and local models (hosted via Ollama or HuggingFace).

- Dynamic Selection: The architecture allows for "Router-style" logic, where the Kernel can choose a specific model based on the complexity, cost, or latency requirements of a specific task.

4. The Output: Structured Results

The final stage is the delivery of the Result. Semantic Kernel doesn't just return raw text; it provides a robust framework for handling diverse outputs:

- Multi-Format Support: Whether the AI returns Markdown, JSON, XML, or CSV, the Kernel can parse and validate these formats.

- Post-Processing Middleware: You can implement filters to clean the data, extract specific entities, or apply Responsible AI (RAI) guardrails to ensure the response is safe and compliant before it reaches the end user.