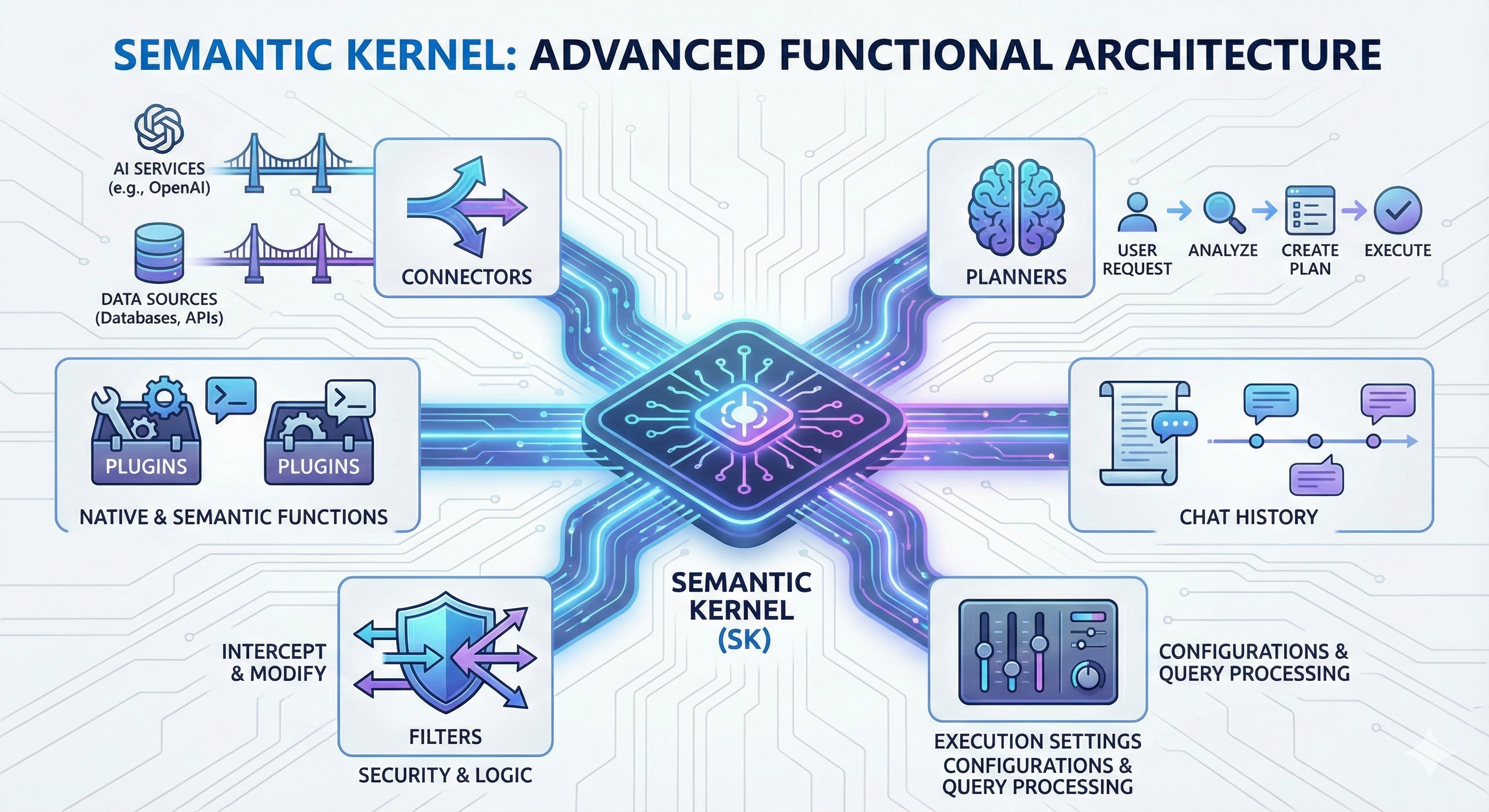

Advanced Functional Architecture in Semantic Kernel

As developers move beyond basic prompt-and-response patterns, understanding the internal orchestration of the Semantic Kernel (SK) becomes essential. To build truly robust, autonomous, and secure AI applications, one must master the advanced functional components that govern how the kernel interacts with the world.

Below is an exploration of the sophisticated architecture that transforms a standard Large Language Model (LLM) into a powerful AI agent.

1. Bridging the Gap: Connectors and Plugins

At its core, Semantic Kernel acts as middleware between your application code and AI services. Connectors and plugins are the "hands" and "tools" of the kernel:

- Connectors: These are the specialized gateways. They allow the kernel to interface with external AI services (like OpenAI or Hugging Face) and various data sources (databases, vector stores, or third-party APIs). They abstract the underlying communication protocols, providing a unified way to fetch or send data.

- Plugins: Think of plugins as modular toolkits. A plugin is a collection of functions, either native (C#, Python, or Java code) or semantic (LLM prompts), that extend the kernel's reach. By grouping related functions under one name, plugins let the AI perform specific tasks, such as sending an email, querying a database, or performing complex calculations.
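To make the connector/plugin split concrete, here is a minimal sketch in plain Python. It does not use the Semantic Kernel library; `ChatConnector`, `EchoConnector`, `MathPlugin`, and `MiniKernel` are hypothetical names invented for illustration, and the real SK classes differ.

```python
from typing import Callable, Dict, Protocol


class ChatConnector(Protocol):
    """Hypothetical connector interface: one method, regardless of provider."""

    def complete(self, prompt: str) -> str: ...


class EchoConnector:
    """Stand-in for a real AI service so the sketch runs offline."""

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class MathPlugin:
    """A plugin groups related native functions under one name."""

    def add(self, a: float, b: float) -> float:
        return a + b

    def multiply(self, a: float, b: float) -> float:
        return a * b


class MiniKernel:
    """Toy kernel: holds one connector and a registry of plugin functions."""

    def __init__(self, connector: ChatConnector) -> None:
        self.connector = connector
        self.functions: Dict[str, Callable] = {}

    def add_plugin(self, plugin: object, name: str) -> None:
        # Register every public method as "<plugin>.<function>".
        for attr in dir(plugin):
            member = getattr(plugin, attr)
            if callable(member) and not attr.startswith("_"):
                self.functions[f"{name}.{attr}"] = member

    def invoke(self, qualified_name: str, **kwargs):
        return self.functions[qualified_name](**kwargs)


kernel = MiniKernel(EchoConnector())
kernel.add_plugin(MathPlugin(), name="math")
result = kernel.invoke("math.add", a=2, b=3)
reply = kernel.connector.complete("Summarize the result")
```

The point of the split is that swapping `EchoConnector` for another provider changes nothing in the plugin code, and adding a plugin changes nothing in the connector.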

2. The Intelligence Layer: Planners and Chat History

For an AI to be more than just reactive, it needs a sense of logic and memory:

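- Planners: The planner is the "brain" of the operation. When a user provides a complex goal, the planner reviews the available plugins and functions, then generates an execution plan: which functions to call, in what order, and with which inputs. Because the plan is produced by the model, it is a workable sequence rather than a guaranteed optimal one. In recent SK releases this role is largely handled through the model's native function calling rather than dedicated planner classes.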

- Chat History: Conversational context is what makes an AI feel intelligent. The Chat History system maintains a record of previous interactions. By injecting this history into new queries, the kernel ensures that the AI remains aware of the ongoing dialogue, allowing for follow-up questions and multi-turn reasoning.
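The two ideas above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not SK's implementation: the `TOOLS` registry, `run_plan`, and this `ChatHistory` class are hypothetical, and the plan is written by hand where a real planner would ask the model to produce it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical tool registry; in a real kernel these would be plugin functions.
TOOLS: Dict[str, Callable[[str], str]] = {
    "fetch_report": lambda topic: f"raw report on {topic}",
    "summarize": lambda text: f"summary of ({text})",
}


def run_plan(plan: List[str], initial_input: str) -> str:
    """Execute tool names in order, feeding each output into the next step."""
    value = initial_input
    for step in plan:
        value = TOOLS[step](value)
    return value


@dataclass
class ChatHistory:
    """Rolling record of (role, content) messages."""

    max_messages: int = 6
    messages: List[Tuple[str, str]] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        self.messages.append((role, content))
        # Keep only the most recent messages so the prompt stays bounded.
        self.messages = self.messages[-self.max_messages:]

    def render(self, new_user_message: str) -> str:
        """Build the prompt: prior turns first, then the new query."""
        lines = [f"{role}: {content}" for role, content in self.messages]
        lines.append(f"user: {new_user_message}")
        return "\n".join(lines)


# A plan a planner might produce for "summarize the sales report".
plan = ["fetch_report", "summarize"]
answer = run_plan(plan, "sales")

history = ChatHistory(max_messages=2)
history.add("user", "Summarize the sales report")
history.add("assistant", answer)
prompt = history.render("Now shorten it")
```

Because the earlier turns are included in `prompt`, the model can resolve "it" in the follow-up. The `max_messages` cap is one simple way to keep the prompt within a token budget; summarizing older turns is a common alternative.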

3. Control and Governance: Filters and Execution Settings

Enterprise-grade applications require strict control over how AI behaves and how data flows:

- Filters: Filters act as a middleware layer for your AI logic. They can intercept and modify function invocations or prompt rendering. This is crucial for:

  - Security: Redacting sensitive information before it reaches the LLM.

  - Custom Logic: Adding logging or validation.

  - Behavioral Control: Overriding certain behaviors based on specific business rules.

- Execution Settings: These are the fine-grained, per-request configurations that dictate how the kernel calls the model. From sampling temperature and token limits to function-calling behavior, execution settings keep each request within the limits of the model and your infrastructure. Retry policies are usually configured separately, on the underlying service client.
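As a rough illustration of how filters and execution settings fit together, the sketch below chains two filters in front of a fake model. Everything here is hypothetical and written in plain Python: `ExecutionSettings`, `redact_filter`, `logging_filter`, `build_pipeline`, and `fake_model` are invented names, not SK's filter or settings API.

```python
import re
from dataclasses import dataclass
from typing import Callable, List

# A "model call" takes a prompt and returns text; a filter wraps one.
ModelCall = Callable[[str], str]
Filter = Callable[[str, ModelCall], str]

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
audit_log: List[str] = []


@dataclass(frozen=True)
class ExecutionSettings:
    """Hypothetical per-request settings (field names are illustrative)."""

    temperature: float = 0.2
    max_tokens: int = 256


def redact_filter(prompt: str, next_call: ModelCall) -> str:
    # Security: strip email addresses before the prompt goes further.
    return next_call(EMAIL.sub("[REDACTED]", prompt))


def logging_filter(prompt: str, next_call: ModelCall) -> str:
    # Custom logic: record what is actually sent onward.
    audit_log.append(prompt)
    return next_call(prompt)


def build_pipeline(filters: List[Filter], model: ModelCall) -> ModelCall:
    """Wrap the model so filters run in list order, outermost first."""
    call = model
    for f in reversed(filters):
        call = (lambda p, f=f, nxt=call: f(p, nxt))
    return call


def fake_model(settings: ExecutionSettings) -> ModelCall:
    # Stand-in for an LLM: keeps at most max_tokens whitespace-separated
    # words. temperature is carried but unused by this fake.
    return lambda prompt: " ".join(prompt.split()[: settings.max_tokens])


settings = ExecutionSettings(temperature=0.0, max_tokens=5)
pipeline = build_pipeline([redact_filter, logging_filter], fake_model(settings))
output = pipeline("Email alice@example.com about the quarterly budget review")
```

Order matters: because `redact_filter` runs before `logging_filter`, the audit log only ever sees the redacted prompt. Reversing the list would write the raw address to the log.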